Gradient Descent Experiments

# Setup and Data

from mpl_toolkits.mplot3d import Axes3D
import matplotlib.pyplot as plt
from matplotlib import cm
import matplotlib.colors
from matplotlib import animation, rc
from IPython.display import HTML
import numpy as np
import math

# Visualization options
animation_frames = 50
w_min = -10
w_max = 10
b_min = -10
b_max = 10

# Data
X = np.asarray([3.5, 0.35, 3.2, -2.0, 1.5, -0.5])
Y = np.asarray([0.5, 0.50, 0.5, 0.5, 0.1, 0.3])
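The cells below fit this data with a single sigmoid neuron. For reference, the model and the loss computed by `Tester.loss` are written out here. The per-sample gradient terms returned by `Tester.grad_w` and `Tester.grad_b` are summed over all points in `fit`. This is a derivation read off the code, using σ'(z) = σ(z)(1 - σ(z)):

```latex
\hat{y}_i = \sigma(w x_i + b) = \frac{1}{1 + e^{-(w x_i + b)}}, \qquad
L(w, b) = \sum_{i=1}^{N} \tfrac{1}{2}\,(\hat{y}_i - y_i)^2

\frac{\partial L}{\partial w} = \sum_{i=1}^{N} (\hat{y}_i - y_i)\,\hat{y}_i\,(1 - \hat{y}_i)\,x_i, \qquad
\frac{\partial L}{\partial b} = \sum_{i=1}^{N} (\hat{y}_i - y_i)\,\hat{y}_i\,(1 - \hat{y}_i)
```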

# Optimizer parameters
SGD_optimizer = {'name': 'SGD', 'epoch': 10000, 'lr': 0.1}

# Additional optimizer parameters
MiniBatch_optimizer = {'name': 'MiniBatch', 'epoch': 10000, 'lr': 1}
Momentum_optimizer = {'name': 'Momentum', 'epoch': 10000, 'lr': 1}
NAG_optimizer = {'name': 'NAG', 'epoch': 10000, 'lr': 1}
AdaGrad_optimizer = {'name': 'AdaGrad', 'epoch': 10000, 'lr': 0.1}
RMSProp_optimizer = {'name': 'RMSProp', 'epoch': 10000, 'lr': 0.1}
Adam_optimizer = {'name': 'Adam', 'epoch': 10000, 'lr': 0.1}

# Optimizer code

# Optimizers: each implementation is to be completed; SGD is provided as an example
class Tester:
    def __init__(self, w_init=-6.0, b_init=4.0, optimizer=SGD_optimizer):
        self.w = w_init
        self.b = b_init
        self.w_h = []
        self.b_h = []
        self.e_h = []
        self.optimizer = optimizer
        # Additional parameters, set here if needed

    # Activation function
    def sigmoid(self, x, w=None, b=None):
        if w is None:
            w = self.w
        if b is None:
            b = self.b
        return 1. / (1. + np.exp(-(w * x + b)))

    # Compute the error (sum of squared errors over all points)
    def loss(self, X, Y, w=None, b=None):
        if w is None:
            w = self.w
        if b is None:
            b = self.b
        cost = 0
        for x, y in zip(X, Y):
            cost += 0.5 * (self.sigmoid(x, w, b) - y) ** 2
        return cost

    # Per-sample gradient of the loss with respect to w
    def grad_w(self, x, y, w=None, b=None):
        if w is None:
            w = self.w
        if b is None:
            b = self.b
        y_pred = self.sigmoid(x, w, b)
        return (y_pred - y) * y_pred * (1 - y_pred) * x

    # Per-sample gradient of the loss with respect to b
    def grad_b(self, x, y, w=None, b=None):
        if w is None:
            w = self.w
        if b is None:
            b = self.b
        y_pred = self.sigmoid(x, w, b)
        return (y_pred - y) * y_pred * (1 - y_pred)

    def fit(self, X, Y):
        self.w_h = []
        self.b_h = []
        self.e_h = []
        self.X = X
        self.Y = Y
        if self.optimizer['name'] == 'SGD':
            for i in range(self.optimizer['epoch']):
                dw, db = 0, 0
                # Accumulate gradients over all points, then update once per epoch
                for x, y in zip(X, Y):
                    dw += self.grad_w(x, y)
                    db += self.grad_b(x, y)
                self.w -= self.optimizer['lr'] * dw
                self.b -= self.optimizer['lr'] * db
                # Record parameter and error history for visualization
                self.append_param_history()
        elif self.optimizer['name'] == 'MiniBatch':
            batch_size = 2
            num_points_seen = 0
            for i in range(self.optimizer['epoch']):
                dw, db = 0, 0
                for x, y in zip(X, Y):
                    dw += self.grad_w(x, y)
                    db += self.grad_b(x, y)
                    num_points_seen += 1
                    # Update after every batch_size points
                    if num_points_seen % batch_size == 0:
                        self.w -= self.optimizer['lr'] * dw
                        self.b -= self.optimizer['lr'] * db
                        dw, db = 0, 0
                self.append_param_history()
        elif self.optimizer['name'] == 'Momentum':
            prev_v_w, prev_v_b, gamma = 0, 0, 0.9
            for i in range(self.optimizer['epoch']):
                dw, db = 0, 0
                for x, y in zip(X, Y):
                    dw += self.grad_w(x, y)
                    db += self.grad_b(x, y)
                # Velocity: decayed previous velocity plus the current scaled gradient
                v_w = gamma * prev_v_w + self.optimizer['lr'] * dw
                v_b = gamma * prev_v_b + self.optimizer['lr'] * db
                self.w -= v_w
                self.b -= v_b
                prev_v_w = v_w
                prev_v_b = v_b
                self.append_param_history()
        elif self.optimizer['name'] == 'NAG':
            prev_v_w, prev_v_b, gamma = 0, 0, 0.9
            for i in range(self.optimizer['epoch']):
                dw, db = 0, 0
                # Look-ahead: evaluate the gradient at the point the momentum is about to reach
                v_w = gamma * prev_v_w
                v_b = gamma * prev_v_b
                for x, y in zip(X, Y):
                    dw += self.grad_w(x, y, self.w - v_w, self.b - v_b)
                    db += self.grad_b(x, y, self.w - v_w, self.b - v_b)
                v_w = gamma * prev_v_w + self.optimizer['lr'] * dw
                v_b = gamma * prev_v_b + self.optimizer['lr'] * db
                self.w -= v_w
                self.b -= v_b
                prev_v_w = v_w
                prev_v_b = v_b
                self.append_param_history()
        elif self.optimizer['name'] == 'AdaGrad':
            v_w, v_b, eps = 0, 0, 1e-8
            for i in range(self.optimizer['epoch']):
                dw, db = 0, 0
                for x, y in zip(X, Y):
                    dw += self.grad_w(x, y)
                    db += self.grad_b(x, y)
                # Running sum of squared gradients; the effective step size only shrinks
                v_w = v_w + dw ** 2
                v_b = v_b + db ** 2
                self.w = self.w - (self.optimizer['lr'] / np.sqrt(v_w + eps)) * dw
                self.b = self.b - (self.optimizer['lr'] / np.sqrt(v_b + eps)) * db
                self.append_param_history()
        elif self.optimizer['name'] == 'RMSProp':
            v_w, v_b, eps, beta1 = 0, 0, 1e-8, 0.9
            for i in range(self.optimizer['epoch']):
                dw, db = 0, 0
                for x, y in zip(X, Y):
                    dw += self.grad_w(x, y)
                    db += self.grad_b(x, y)
                # Exponential moving average of squared gradients
                v_w = beta1 * v_w + (1 - beta1) * dw ** 2
                v_b = beta1 * v_b + (1 - beta1) * db ** 2
                self.w -= (self.optimizer['lr'] / np.sqrt(v_w + eps)) * dw
                self.b -= (self.optimizer['lr'] / np.sqrt(v_b + eps)) * db
                self.append_param_history()
        elif self.optimizer['name'] == 'Adam':
            m_w, m_b, v_w, v_b = 0, 0, 0, 0
            eps, beta1, beta2 = 1e-8, 0.9, 0.999
            for i in range(self.optimizer['epoch']):
                dw, db = 0, 0
                for x, y in zip(X, Y):
                    dw += self.grad_w(x, y)
                    db += self.grad_b(x, y)
                # First and second moment estimates
                m_w = beta1 * m_w + (1 - beta1) * dw
                m_b = beta1 * m_b + (1 - beta1) * db
                v_w = beta2 * v_w + (1 - beta2) * dw ** 2
                v_b = beta2 * v_b + (1 - beta2) * db ** 2
                # Bias correction for the zero initialization (step count is i + 1)
                m_w_hat = m_w / (1 - math.pow(beta1, i + 1))
                m_b_hat = m_b / (1 - math.pow(beta1, i + 1))
                v_w_hat = v_w / (1 - math.pow(beta2, i + 1))
                v_b_hat = v_b / (1 - math.pow(beta2, i + 1))
                self.w -= (self.optimizer['lr'] / np.sqrt(v_w_hat + eps)) * m_w_hat
                self.b -= (self.optimizer['lr'] / np.sqrt(v_b_hat + eps)) * m_b_hat
                self.append_param_history()

    def append_param_history(self):
        self.w_h.append(self.w)
        self.b_h.append(self.b)
        self.e_h.append(self.loss(self.X, self.Y))
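A central finite-difference check is a quick way to confirm that `grad_w` and `grad_b` agree with `loss`. The sketch below re-implements the same formulas in vectorized NumPy form. The helper names `analytic_grad` and `numeric_grad` are hypothetical and not part of the notebook. It compares the two at a few arbitrary (w, b) points, including the initial point (-6, 4) used by `Tester`.

```python
import numpy as np

# Same data as in the notebook
X = np.asarray([3.5, 0.35, 3.2, -2.0, 1.5, -0.5])
Y = np.asarray([0.5, 0.50, 0.5, 0.5, 0.1, 0.3])


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def loss(w, b):
    # Sum of 0.5 * squared error over all points, as in Tester.loss
    return np.sum(0.5 * (sigmoid(w * X + b) - Y) ** 2)


def analytic_grad(w, b):
    # Vectorized sum of the per-sample terms returned by Tester.grad_w / grad_b
    y_pred = sigmoid(w * X + b)
    common = (y_pred - Y) * y_pred * (1 - y_pred)
    return np.sum(common * X), np.sum(common)


def numeric_grad(w, b, h=1e-6):
    # Central finite differences in each coordinate
    dw = (loss(w + h, b) - loss(w - h, b)) / (2 * h)
    db = (loss(w, b + h) - loss(w, b - h)) / (2 * h)
    return dw, db


for w, b in [(-6.0, 4.0), (0.5, -0.2), (1.0, 1.0)]:
    print((w, b), analytic_grad(w, b), numeric_grad(w, b))
```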

# Error curves

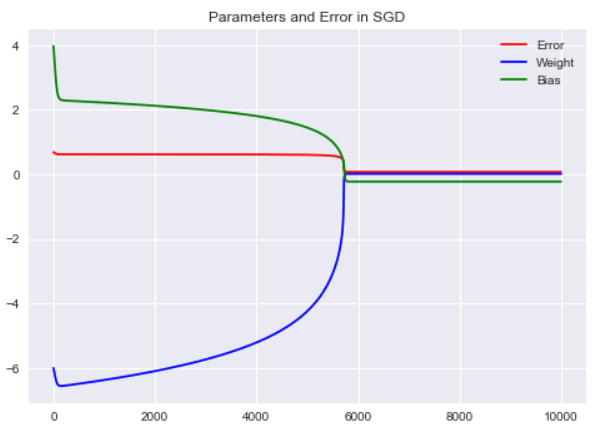

# SGD

Exp = Tester(optimizer=SGD_optimizer)
# The "seaborn" style was renamed "seaborn-v0_8" in Matplotlib 3.6; fall back on older versions
plt.style.use("seaborn-v0_8" if "seaborn-v0_8" in plt.style.available else "seaborn")
Exp.fit(X, Y)
plt.plot(Exp.e_h, 'r')
plt.plot(Exp.w_h, 'b')
plt.plot(Exp.b_h, 'g')
plt.legend(["Error", "Weight", "Bias"])
plt.title("Parameters and Error in SGD")
plt.show()

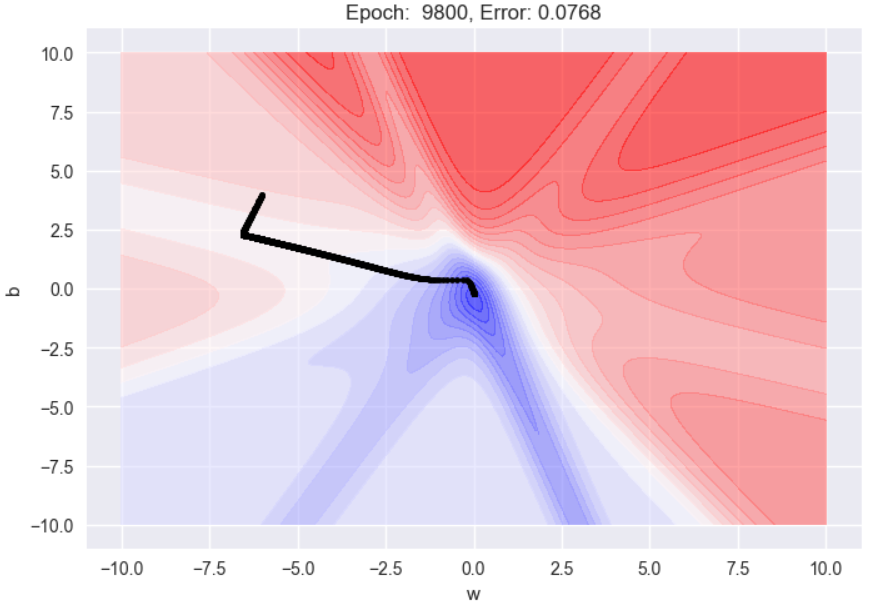

# SGD parameter visualization
W = np.linspace(w_min, w_max, 256)
b = np.linspace(b_min, b_max, 256)
WW, BB = np.meshgrid(W, b)
# Loss evaluated on the (w, b) grid, used as the contour background
Z = Exp.loss(X, Y, WW, BB)

def plot_animate_2d(i):
    # Map animation frame i to the corresponding epoch
    i = int(i * (SGD_optimizer['epoch'] / animation_frames))
    line.set_data(Exp.w_h[:i+1], Exp.b_h[:i+1])
    title.set_text('Epoch: {:d}, Error: {:.4f}'.format(i, Exp.e_h[i]))
    return line, title

fig = plt.figure(dpi=100)
ax = plt.subplot(111)
ax.set_xlabel('w')
ax.set_xlim(w_min - 1, w_max + 1)
ax.set_ylabel('b')
ax.set_ylim(b_min - 1, b_max + 1)
title = ax.set_title('Epoch 0')
cset = plt.contourf(WW, BB, Z, 25, alpha=0.6, cmap=cm.bwr)
i = 0
line, = ax.plot(Exp.w_h[:i+1], Exp.b_h[:i+1], color='black', marker='.')
anim = animation.FuncAnimation(fig, func=plot_animate_2d, frames=animation_frames)
rc('animation', html='jshtml')
print('Optimizer = {}, Lr = {}'.format(SGD_optimizer['name'], SGD_optimizer['lr']))
anim

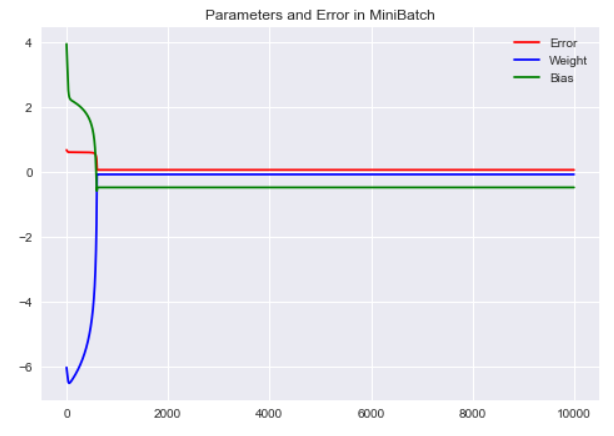
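The `SGD` branch of `fit` accumulates gradients over all six points before making a single update per epoch. Strictly speaking, it therefore performs full-batch gradient descent. A stochastic version would instead update after each sample, or after each mini-batch as in the next section. With learning rate η, the branch computes:

```latex
w \leftarrow w - \eta \sum_{i=1}^{N} \frac{\partial \ell_i}{\partial w}, \qquad
b \leftarrow b - \eta \sum_{i=1}^{N} \frac{\partial \ell_i}{\partial b}, \qquad
\ell_i = \tfrac{1}{2}\,(\hat{y}_i - y_i)^2
```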

# MiniBatch

Exp = Tester(optimizer=MiniBatch_optimizer)
plt.style.use("seaborn-v0_8" if "seaborn-v0_8" in plt.style.available else "seaborn")
Exp.fit(X, Y)
plt.plot(Exp.e_h, 'r')
plt.plot(Exp.w_h, 'b')
plt.plot(Exp.b_h, 'g')
plt.legend(["Error", "Weight", "Bias"])
plt.title("Parameters and Error in MiniBatch")
plt.show()

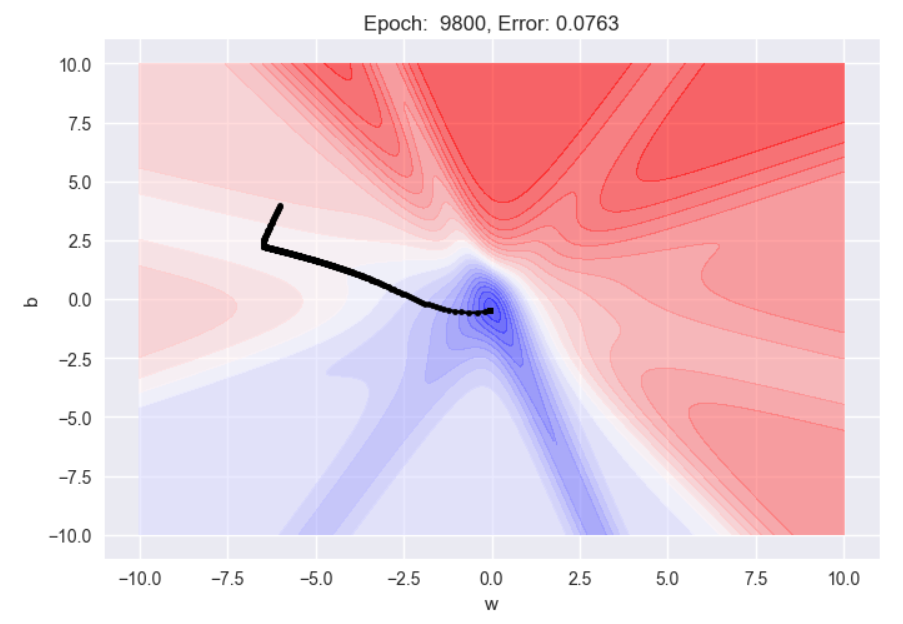

W = np.linspace(w_min, w_max, 256)
b = np.linspace(b_min, b_max, 256)
WW, BB = np.meshgrid(W, b)
Z = Exp.loss(X, Y, WW, BB)

def plot_animate_2d(i):
    i = int(i * (MiniBatch_optimizer['epoch'] / animation_frames))
    line.set_data(Exp.w_h[:i+1], Exp.b_h[:i+1])
    title.set_text('Epoch: {:d}, Error: {:.4f}'.format(i, Exp.e_h[i]))
    return line, title

fig = plt.figure(dpi=100)
ax = plt.subplot(111)
ax.set_xlabel('w')
ax.set_xlim(w_min - 1, w_max + 1)
ax.set_ylabel('b')
ax.set_ylim(b_min - 1, b_max + 1)
title = ax.set_title('Epoch 0')
cset = plt.contourf(WW, BB, Z, 25, alpha=0.6, cmap=cm.bwr)
i = 0
line, = ax.plot(Exp.w_h[:i+1], Exp.b_h[:i+1], color='black', marker='.')
anim = animation.FuncAnimation(fig, func=plot_animate_2d, frames=animation_frames)
rc('animation', html='jshtml')
print('Optimizer = {}, Lr = {}'.format(MiniBatch_optimizer['name'], MiniBatch_optimizer['lr']))
anim

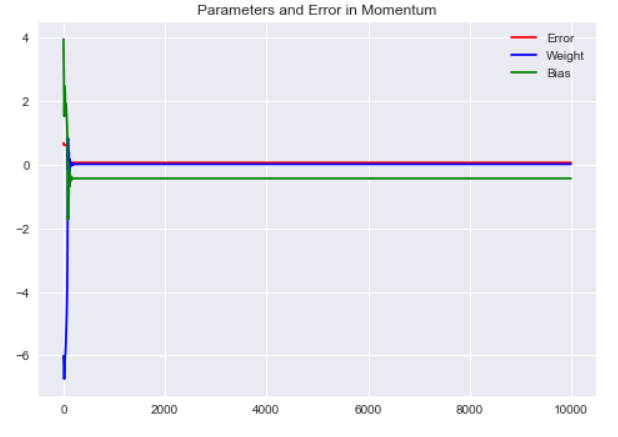
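The `MiniBatch` branch uses a running counter with a fixed `batch_size = 2`. With six points every epoch splits evenly. If N were not divisible by the batch size, however, the leftover partial batch would be lost, because `dw, db` are reset at the start of the next epoch. The sketch below is an alternative under stated assumptions, not the notebook's code. It shuffles each epoch and also applies the final partial batch; `minibatch_epoch` and `batch_grad` are hypothetical helper names. With `batch_size=1` it becomes per-sample SGD, and with `batch_size=len(X)` it reduces to the single full-batch step of the `SGD` branch.

```python
import numpy as np

X = np.asarray([3.5, 0.35, 3.2, -2.0, 1.5, -0.5])
Y = np.asarray([0.5, 0.50, 0.5, 0.5, 0.1, 0.3])


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def batch_grad(w, b, xb, yb):
    # Summed gradient over one batch, using the same per-sample terms as Tester.grad_w / grad_b
    y_pred = sigmoid(w * xb + b)
    common = (y_pred - yb) * y_pred * (1 - y_pred)
    return np.sum(common * xb), np.sum(common)


def minibatch_epoch(w, b, lr, batch_size, rng):
    """One epoch of mini-batch gradient descent (hypothetical helper).

    Shuffles the data, then updates once per batch, including a final
    partial batch when len(X) is not a multiple of batch_size.
    Returns the new (w, b) and the number of updates performed.
    """
    order = rng.permutation(len(X))
    updates = 0
    for start in range(0, len(X), batch_size):
        idx = order[start:start + batch_size]
        dw, db = batch_grad(w, b, X[idx], Y[idx])
        w -= lr * dw
        b -= lr * db
        updates += 1
    return w, b, updates


# batch_size=4 with 6 points: one full batch plus one partial batch per epoch
rng = np.random.default_rng(0)
w, b = -6.0, 4.0
for epoch in range(100):
    w, b, n_updates = minibatch_epoch(w, b, lr=1.0, batch_size=4, rng=rng)
print(w, b, n_updates)
```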

# Momentum

Exp = Tester(optimizer=Momentum_optimizer)
plt.style.use("seaborn-v0_8" if "seaborn-v0_8" in plt.style.available else "seaborn")
Exp.fit(X, Y)
plt.plot(Exp.e_h, 'r')
plt.plot(Exp.w_h, 'b')
plt.plot(Exp.b_h, 'g')
plt.legend(["Error", "Weight", "Bias"])
plt.title("Parameters and Error in Momentum")
plt.show()

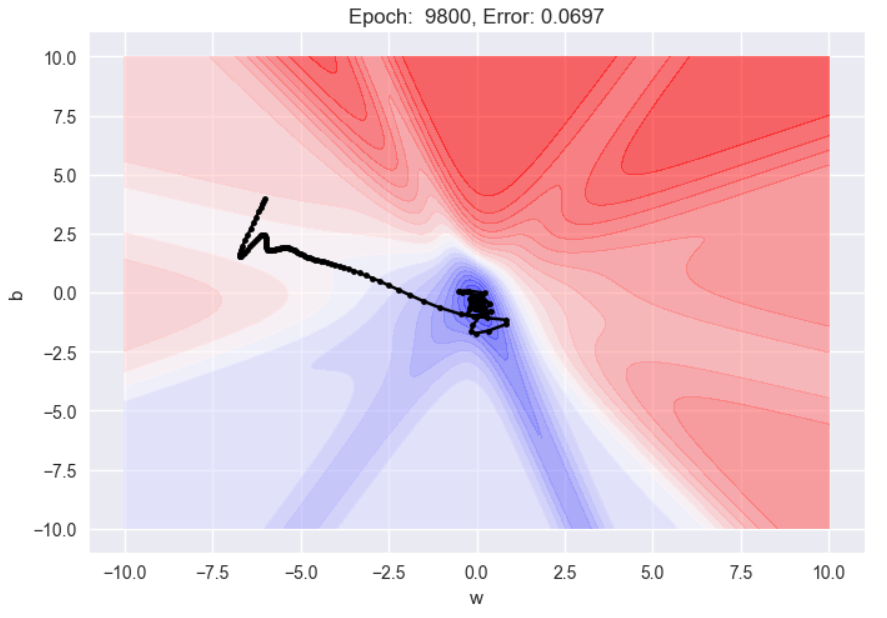

W = np.linspace(w_min, w_max, 256)
b = np.linspace(b_min, b_max, 256)
WW, BB = np.meshgrid(W, b)
Z = Exp.loss(X, Y, WW, BB)

def plot_animate_2d(i):
    i = int(i * (Momentum_optimizer['epoch'] / animation_frames))
    line.set_data(Exp.w_h[:i+1], Exp.b_h[:i+1])
    title.set_text('Epoch: {:d}, Error: {:.4f}'.format(i, Exp.e_h[i]))
    return line, title

fig = plt.figure(dpi=100)
ax = plt.subplot(111)
ax.set_xlabel('w')
ax.set_xlim(w_min - 1, w_max + 1)
ax.set_ylabel('b')
ax.set_ylim(b_min - 1, b_max + 1)
title = ax.set_title('Epoch 0')
cset = plt.contourf(WW, BB, Z, 25, alpha=0.6, cmap=cm.bwr)
i = 0
line, = ax.plot(Exp.w_h[:i+1], Exp.b_h[:i+1], color='black', marker='.')
anim = animation.FuncAnimation(fig, func=plot_animate_2d, frames=animation_frames)
rc('animation', html='jshtml')
print('Optimizer = {}, Lr = {}'.format(Momentum_optimizer['name'], Momentum_optimizer['lr']))
anim

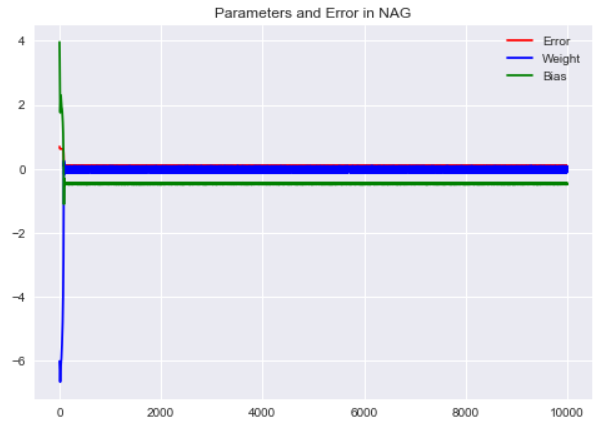
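The sketch below isolates the momentum update. It applies the same rule as the `Momentum` branch (v ← γv + η∇f, θ ← θ - v, with γ = 0.9) to a hypothetical one-dimensional quadratic f(θ) = θ², whose gradient is 2θ and whose minimum is at 0. This toy objective is an assumption chosen because its behavior is easy to verify; it is not the sigmoid loss above. On this toy, momentum carries the iterate past 0 (θ goes negative within the first few steps) before it settles. Plain gradient descent with the same learning rate shrinks θ monotonically.

```python
def grad_f(theta):
    # Gradient of the toy objective f(theta) = theta**2 (minimum at 0)
    return 2.0 * theta


def run_momentum(theta, lr=0.1, gamma=0.9, steps=500):
    """Momentum with the same update order as the Momentum branch of Tester.fit."""
    prev_v = 0.0
    history = [theta]
    for _ in range(steps):
        v = gamma * prev_v + lr * grad_f(theta)  # decayed velocity plus scaled gradient
        theta -= v
        prev_v = v
        history.append(theta)
    return history


def run_gd(theta, lr=0.1, steps=500):
    """Plain gradient descent on the same objective, for comparison."""
    history = [theta]
    for _ in range(steps):
        theta -= lr * grad_f(theta)
        history.append(theta)
    return history


mom = run_momentum(1.0)
gd = run_gd(1.0)
print("momentum, first steps:", [round(t, 4) for t in mom[:8]])
print("plain GD, first steps:", [round(t, 4) for t in gd[:8]])
```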

# NAGD

Exp = Tester(optimizer=NAG_optimizer)
plt.style.use("seaborn-v0_8" if "seaborn-v0_8" in plt.style.available else "seaborn")
Exp.fit(X, Y)
plt.plot(Exp.e_h, 'r')
plt.plot(Exp.w_h, 'b')
plt.plot(Exp.b_h, 'g')
plt.legend(["Error", "Weight", "Bias"])
plt.title("Parameters and Error in NAG")
plt.show()

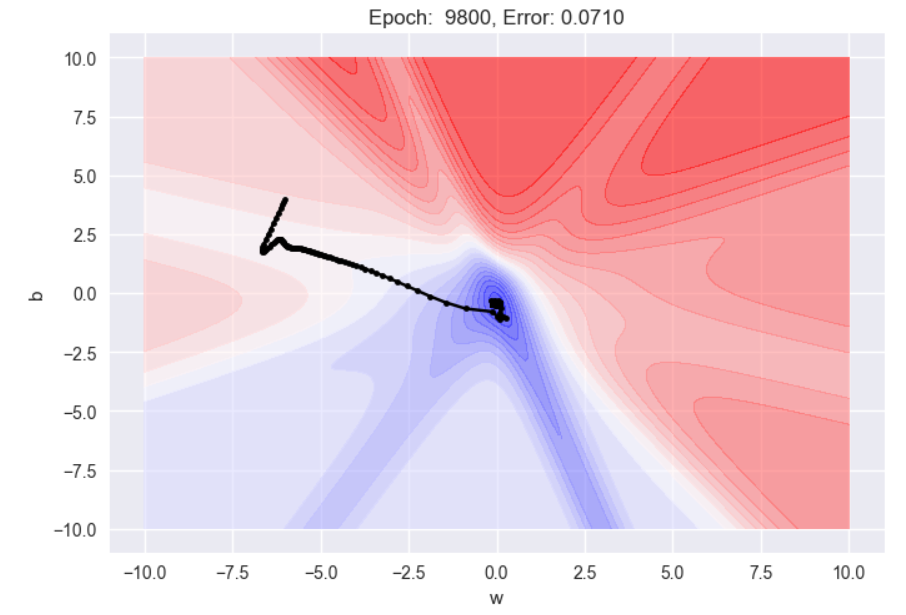

W = np.linspace(w_min, w_max, 256)
b = np.linspace(b_min, b_max, 256)
WW, BB = np.meshgrid(W, b)
Z = Exp.loss(X, Y, WW, BB)

def plot_animate_2d(i):
    i = int(i * (NAG_optimizer['epoch'] / animation_frames))
    line.set_data(Exp.w_h[:i+1], Exp.b_h[:i+1])
    title.set_text('Epoch: {:d}, Error: {:.4f}'.format(i, Exp.e_h[i]))
    return line, title

fig = plt.figure(dpi=100)
ax = plt.subplot(111)
ax.set_xlabel('w')
ax.set_xlim(w_min - 1, w_max + 1)
ax.set_ylabel('b')
ax.set_ylim(b_min - 1, b_max + 1)
title = ax.set_title('Epoch 0')
cset = plt.contourf(WW, BB, Z, 25, alpha=0.6, cmap=cm.bwr)
i = 0
line, = ax.plot(Exp.w_h[:i+1], Exp.b_h[:i+1], color='black', marker='.')
anim = animation.FuncAnimation(fig, func=plot_animate_2d, frames=animation_frames)
rc('animation', html='jshtml')
print('Optimizer = {}, Lr = {}'.format(NAG_optimizer['name'], NAG_optimizer['lr']))
anim

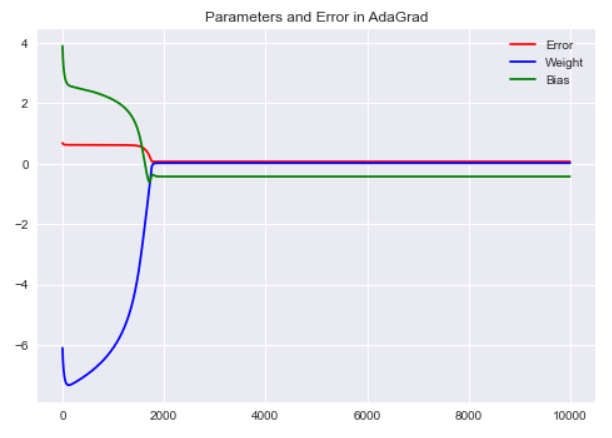
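The `NAG` branch differs from `Momentum` only in where the gradient is evaluated. It uses the look-ahead point w - γ·v_prev rather than the current w. The sketch below runs both rules side by side on a hypothetical toy quadratic f(θ) = θ², which is an illustrative assumption rather than the notebook's loss. On this example the look-ahead gradient starts braking earlier, so the largest overshoot past the minimum at 0 is smaller for NAG than for plain momentum.

```python
def grad_f(theta):
    # Gradient of the toy objective f(theta) = theta**2
    return 2.0 * theta


def run(theta, nesterov, lr=0.1, gamma=0.9, steps=200):
    """Momentum (nesterov=False) or NAG (nesterov=True), mirroring Tester.fit."""
    prev_v = 0.0
    history = [theta]
    for _ in range(steps):
        # NAG evaluates the gradient at the look-ahead point theta - gamma * prev_v
        point = theta - gamma * prev_v if nesterov else theta
        v = gamma * prev_v + lr * grad_f(point)
        theta -= v
        prev_v = v
        history.append(theta)
    return history


nag = run(1.0, nesterov=True)
mom = run(1.0, nesterov=False)
print("largest overshoot, NAG:     ", min(nag))
print("largest overshoot, momentum:", min(mom))
```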

# AdaGrad

Exp = Tester(optimizer=AdaGrad_optimizer)
plt.style.use("seaborn-v0_8" if "seaborn-v0_8" in plt.style.available else "seaborn")
Exp.fit(X, Y)
plt.plot(Exp.e_h, 'r')
plt.plot(Exp.w_h, 'b')
plt.plot(Exp.b_h, 'g')
plt.legend(["Error", "Weight", "Bias"])
plt.title("Parameters and Error in AdaGrad")
plt.show()

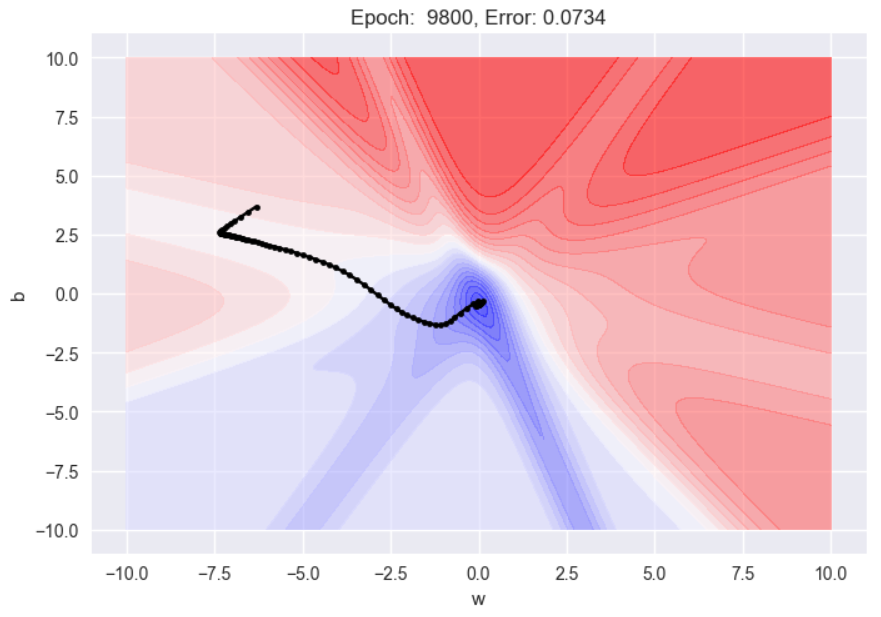

W = np.linspace(w_min, w_max, 256)
b = np.linspace(b_min, b_max, 256)
WW, BB = np.meshgrid(W, b)
Z = Exp.loss(X, Y, WW, BB)

def plot_animate_2d(i):
    i = int(i * (AdaGrad_optimizer['epoch'] / animation_frames))
    line.set_data(Exp.w_h[:i+1], Exp.b_h[:i+1])
    title.set_text('Epoch: {:d}, Error: {:.4f}'.format(i, Exp.e_h[i]))
    return line, title

fig = plt.figure(dpi=100)
ax = plt.subplot(111)
ax.set_xlabel('w')
ax.set_xlim(w_min - 1, w_max + 1)
ax.set_ylabel('b')
ax.set_ylim(b_min - 1, b_max + 1)
title = ax.set_title('Epoch 0')
cset = plt.contourf(WW, BB, Z, 25, alpha=0.6, cmap=cm.bwr)
i = 0
line, = ax.plot(Exp.w_h[:i+1], Exp.b_h[:i+1], color='black', marker='.')
anim = animation.FuncAnimation(fig, func=plot_animate_2d, frames=animation_frames)
rc('animation', html='jshtml')
print('Optimizer = {}, Lr = {}'.format(AdaGrad_optimizer['name'], AdaGrad_optimizer['lr']))
anim

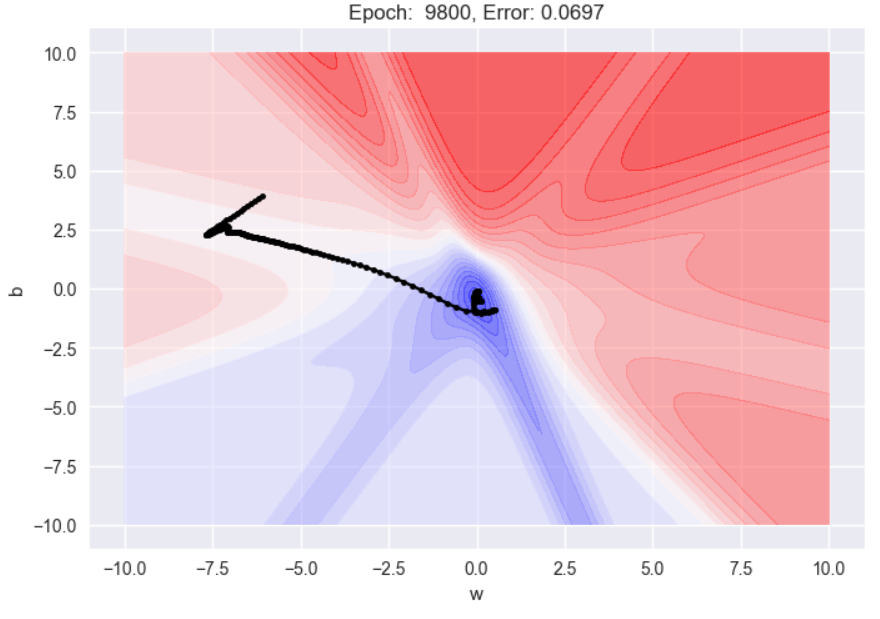
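In the `AdaGrad` branch, `v_w` is a running sum of squared gradients. The effective step size `lr / np.sqrt(v_w + eps)` can therefore only shrink as training proceeds. The small sketch below assumes a hypothetical constant gradient g = 1 at every step and shows the decay. After t steps the effective learning rate is about lr/√t: 0.05 at step 4 and 0.01 at step 100 for lr = 0.1.

```python
import numpy as np


def adagrad_effective_lr(grads, lr=0.1, eps=1e-8):
    """Effective step size lr / sqrt(v + eps) after each step, as in the AdaGrad branch."""
    v = 0.0
    eff = []
    for g in grads:
        v = v + g ** 2  # running sum of squared gradients
        eff.append(lr / np.sqrt(v + eps))
    return eff


eff = adagrad_effective_lr([1.0] * 100)
print(eff[0], eff[3], eff[99])
```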

# RMSProp

Exp = Tester(optimizer=RMSProp_optimizer)
plt.style.use("seaborn-v0_8" if "seaborn-v0_8" in plt.style.available else "seaborn")
Exp.fit(X, Y)
plt.plot(Exp.e_h, 'r')
plt.plot(Exp.w_h, 'b')
plt.plot(Exp.b_h, 'g')
plt.legend(["Error", "Weight", "Bias"])
plt.title("Parameters and Error in RMSProp")
plt.show()

W = np.linspace(w_min, w_max, 256)
b = np.linspace(b_min, b_max, 256)
WW, BB = np.meshgrid(W, b)
Z = Exp.loss(X, Y, WW, BB)

def plot_animate_2d(i):
    i = int(i * (RMSProp_optimizer['epoch'] / animation_frames))
    line.set_data(Exp.w_h[:i+1], Exp.b_h[:i+1])
    title.set_text('Epoch: {:d}, Error: {:.4f}'.format(i, Exp.e_h[i]))
    return line, title

fig = plt.figure(dpi=100)
ax = plt.subplot(111)
ax.set_xlabel('w')
ax.set_xlim(w_min - 1, w_max + 1)
ax.set_ylabel('b')
ax.set_ylim(b_min - 1, b_max + 1)
title = ax.set_title('Epoch 0')
cset = plt.contourf(WW, BB, Z, 25, alpha=0.6, cmap=cm.bwr)
i = 0
line, = ax.plot(Exp.w_h[:i+1], Exp.b_h[:i+1], color='black', marker='.')
anim = animation.FuncAnimation(fig, func=plot_animate_2d, frames=animation_frames)
rc('animation', html='jshtml')
print('Optimizer = {}, Lr = {}'.format(RMSProp_optimizer['name'], RMSProp_optimizer['lr']))
anim
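RMSProp replaces AdaGrad's running sum with an exponential moving average of squared gradients; the decay rate is called `beta1` in the notebook. The sketch below again assumes a hypothetical constant gradient g = 1 and tracks both effective step sizes over the same steps. The AdaGrad value keeps decaying like lr/√t. The RMSProp value instead settles near lr/|g|, so its step size does not vanish during long runs.

```python
import numpy as np


def effective_lrs(grads, lr=0.1, beta=0.9, eps=1e-8):
    """Effective step sizes for AdaGrad (running sum) and RMSProp (moving average)."""
    v_ada, v_rms = 0.0, 0.0
    ada, rms = [], []
    for g in grads:
        v_ada = v_ada + g ** 2                        # AdaGrad accumulator
        v_rms = beta * v_rms + (1 - beta) * g ** 2    # RMSProp moving average
        ada.append(lr / np.sqrt(v_ada + eps))
        rms.append(lr / np.sqrt(v_rms + eps))
    return ada, rms


ada, rms = effective_lrs([1.0] * 200)
print("AdaGrad:", ada[-1], "RMSProp:", rms[-1])
```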

# Adam

Exp = Tester(optimizer=Adam_optimizer)
plt.style.use("seaborn-v0_8" if "seaborn-v0_8" in plt.style.available else "seaborn")
Exp.fit(X, Y)
plt.plot(Exp.e_h, 'r')
plt.plot(Exp.w_h, 'b')
plt.plot(Exp.b_h, 'g')
plt.legend(["Error", "Weight", "Bias"])
plt.title("Parameters and Error in Adam")
plt.show()

W = np.linspace(w_min, w_max, 256)
b = np.linspace(b_min, b_max, 256)
WW, BB = np.meshgrid(W, b)
Z = Exp.loss(X, Y, WW, BB)

def plot_animate_2d(i):
    i = int(i * (Adam_optimizer['epoch'] / animation_frames))
    line.set_data(Exp.w_h[:i+1], Exp.b_h[:i+1])
    title.set_text('Epoch: {:d}, Error: {:.4f}'.format(i, Exp.e_h[i]))
    return line, title

fig = plt.figure(dpi=100)
ax = plt.subplot(111)
ax.set_xlabel('w')
ax.set_xlim(w_min - 1, w_max + 1)
ax.set_ylabel('b')
ax.set_ylim(b_min - 1, b_max + 1)
title = ax.set_title('Epoch 0')
cset = plt.contourf(WW, BB, Z, 25, alpha=0.6, cmap=cm.bwr)
i = 0
line, = ax.plot(Exp.w_h[:i+1], Exp.b_h[:i+1], color='black', marker='.')
anim = animation.FuncAnimation(fig, func=plot_animate_2d, frames=animation_frames)
rc('animation', html='jshtml')
print('Optimizer = {}, Lr = {}'.format(Adam_optimizer['name'], Adam_optimizer['lr']))
anim
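The `Adam` branch divides the moment estimates by `1 - beta1**(i+1)` and `1 - beta2**(i+1)`. Because `m` and `v` start at zero, the raw estimates are biased toward zero early on. With β1 = 0.9 and β2 = 0.999 the two biases do not cancel: at step 1 the raw ratio m/√v is scaled by (1 - β1)/√(1 - β2) ≈ 3.16. The sketch below computes Adam's first update both ways from m = v = 0; `adam_first_step` is a hypothetical helper. With bias correction, the first step is approximately lr · sign(g), regardless of the gradient's magnitude.

```python
import math

import numpy as np


def adam_first_step(g, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
    """Size of Adam's first update for gradient g, with and without bias correction.

    Uses the same formulas as the Adam branch of Tester.fit, starting from m = v = 0.
    """
    m = (1 - beta1) * g
    v = (1 - beta2) * g ** 2
    m_hat = m / (1 - math.pow(beta1, 1))
    v_hat = v / (1 - math.pow(beta2, 1))
    corrected = lr / np.sqrt(v_hat + eps) * m_hat
    uncorrected = lr / np.sqrt(v + eps) * m
    return corrected, uncorrected


corrected, uncorrected = adam_first_step(0.5)
print("with bias correction:", corrected, "without:", uncorrected)
```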

All done. Each optimizer also produces an animated plot of its trajectory over the loss contours.